There are two ways to build vertical AI startups: copilots and autopilots. A copilot sells a tool; an autopilot sells the work itself. This idea was first introduced in Sarah Tavel’s “sell work, not software” in 2023, and it has become increasingly relevant in 2026. As Julian Bek put it in “services become the new software,” “the next $1T company will be a software company masquerading as a services firm.”

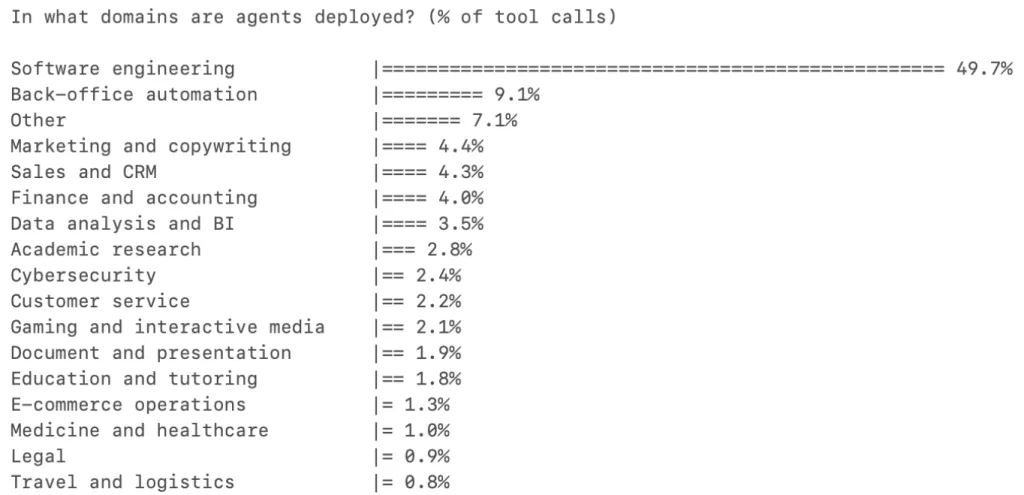

Which verticals are ready for autopilots today, and which are still copilot territory? Most verticals today, aside from coding, have little AI usage:

Most interesting verticals don’t have significant AI agents yet. Coding copilots and autopilots are the exception.

The “services are the new software” essay distinguishes between tasks that require intelligence and tasks that require judgement. It also notes that the two will ultimately converge, as the human judgement captured by copilots generates training data for autopilots.

An alternative framing is short vs long feedback loops. AI verticals with short, self-correcting feedback loops (did the invoice get paid? did the code compile and pass tests?) are natural autopilots. Verticals with long, dangerous, or regulated feedback loops can become autopilots with care and some specialized design, and may ultimately be very durable companies.

Let’s go through some examples of autopilots vs copilots in the major AI verticals beyond coding: legal AI, finance AI, and healthcare AI.

Legal AI: copilots have scaled to $10B companies, autopilots are emerging.

Legal is one of the fastest-growing verticals in AI. Harvey and Legora are legal copilots. They help lawyers draft and review contracts, but the attorney remains responsible for the final work product. Both have grown extremely quickly, with Legora going from $4M to $23M ARR in a year. Harvey has raised at an $11B valuation and Legora has hit $5.55B (as of March 2026). Crosby and EvenUp, by contrast, are the poster children for the “sell work, not software” thesis.

The distinction tracks the feedback-loop line. Assembling a demand package from medical records has a short, verifiable feedback loop—either the records are accurately summarized or they aren’t, and errors are caught before filing. Advising a client on litigation strategy has a long, high-stakes feedback loop—you don’t find out if you were right until months or years later, and the cost of being wrong is catastrophic.

The pattern: administrative legal work is going autopilot; advisory and courtroom work stays copilot.

Finance: from bookkeeping to advisory

AI applied to finance follows a similar split to legal. Back-office transaction processing is going autopilot, whereas forward-looking advisory stays copilot:

- Autopilot territory: Pilot sells completed bookkeeping—categorized transactions, reconciled accounts, monthly closes—priced against the outsourced bookkeeper, not as a per-seat SaaS fee. These tasks are high-volume, rule-governed, and already widely outsourced to BPO firms, which is exactly the profile Tavel’s “outsourced labor” test predicts.

- Copilot territory: Accrual augments accounting firms with AI-powered workspaces for tax and assurance—“preparation time has dropped by more than 85 percent”—but the accountant stays in the loop, reviewing every output. Cos (Vinay Iyengar’s new startup) does the same for financial planning and analysis. FP&A has long feedback loops—you build a forecast, present it to the board, and learn whether your assumptions were right a quarter or a year later. The cost of a wrong assumption compounds silently. A human CFO still owns the outcome; the AI accelerates the modeling.

Healthcare: administrative vs care delivery, copilot vs autopilot.

Healthcare has the largest addressable market for both copilots and autopilots, but it’s the vertical where regulatory frameworks matter most.

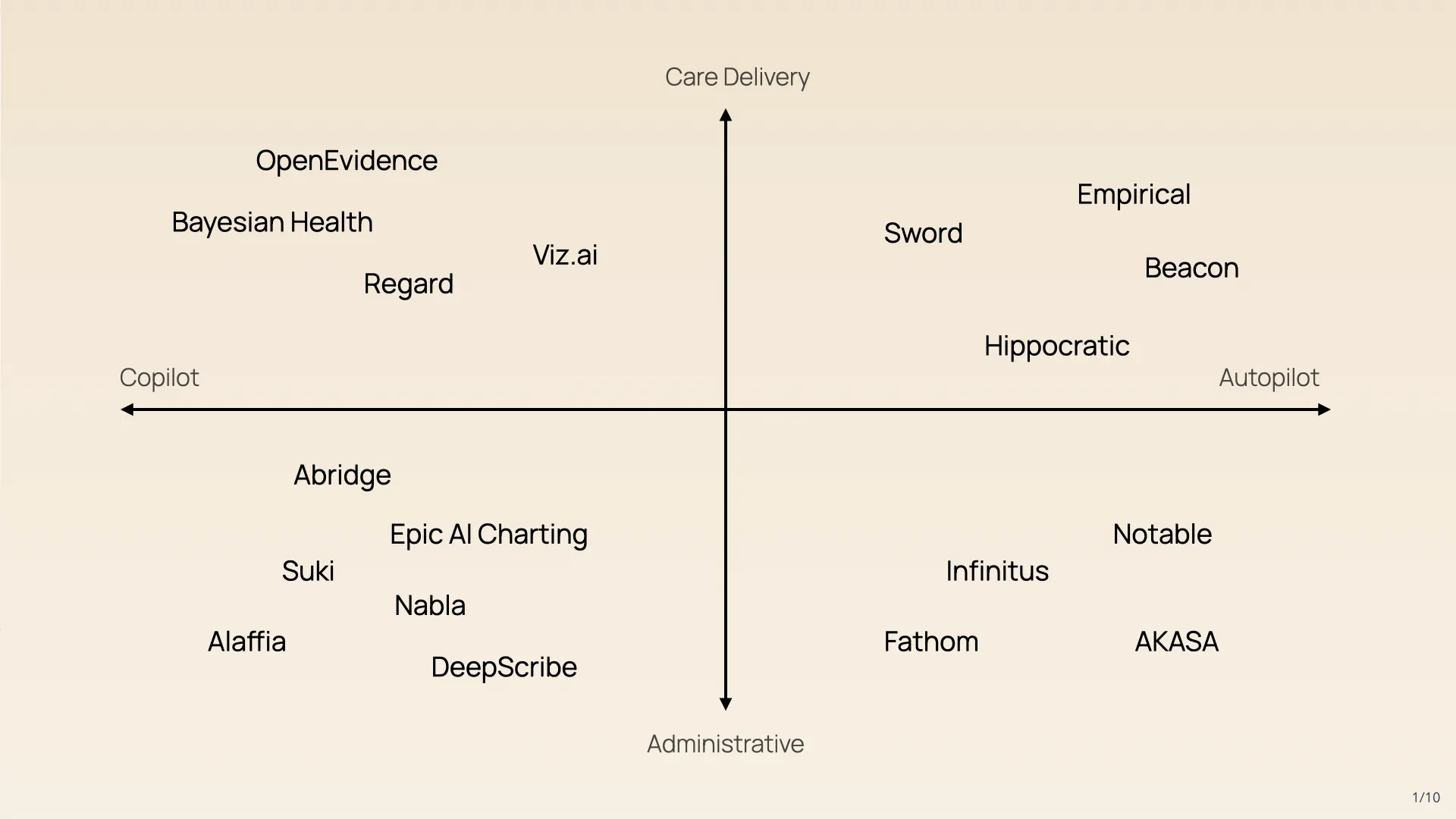

The two axes that matter are administrative vs. care delivery and copilot vs. autopilot. You can ship an autopilot for legal demand packages without asking anyone’s permission. You cannot autonomously prescribe a statin without a regulatory framework that says AI can do that.

Healthcare AI mapped on two axes. Administrative tasks (left) are already going autopilot. Clinical tasks (right) are moving from copilot to autopilot as regulation catches up.

Healthcare administration AI is moving from copilot to autopilot

The administrative quadrants are straightforward. Revenue-cycle management (coding, billing, prior authorization) is a $115B market that’s already heavily outsourced. Companies like AKASA and Olive AI have been automating claims processing for years. The feedback loops are short and verifiable: did the claim get paid? Was the code correct? CMS’s prior-authorization reforms (finalized in 2024) further reduce friction by requiring payers to respond to prior-auth requests within 72 hours for urgent cases and seven calendar days for standard ones, making end-to-end automation more viable.

Ambient scribes like Abridge, Suki, and Nabla are examples of copilots that largely help with administrative work (e.g., better clinical documentation leads to better billing). AKASA and Fathom are administrative autopilots.

Clinical copilots vs autopilots

The core verticals of care delivery are some of the largest markets in existence. Cardiovascular spend is roughly $600B per year, oncology is $250B in medicines alone, and metabolic disease is $130B+. These are not niche markets. They’re enormous, growing, and ripe for disruption.

OpenEvidence is the most obvious clinical copilot: it is used by 40% of doctors and has hit a $12B valuation. Health AI autopilots include Sword Health (physical therapy), Empirical (heart health), and Hippocratic (autonomous nurses). Each of these is starting with tasks that are sub-clinical in nature, with the obvious potential to expand to regulated areas (e.g., prescribing) as clear regulatory and reimbursement pathways emerge.

Regulatory and reimbursement shifts are unlocking care delivery autopilots

Clinical care has long feedback loops and life-or-death consequences, so some level of regulation is clearly needed. Legal and regulatory frameworks are catching up to the reality of generative AI very quickly:

The Medicare ACCESS Model launches in July 2026 and explicitly pays for AI-delivered care. CMS’s new payment model provides monthly payments that are not tied to physician time. The payment amount has been explicitly set so that only companies using software can win.

ARPA-H is funding autonomous AI cardiology agents. These are intended to autonomously prescribe, titrate medication, offer dietary and nutritional advice, and escalate to emergency care. This is explicitly an autopilot.

The FDA TEMPO program creates a regulatory pathway for adaptive AI that continuously learns and updates post-deployment. Traditional 510(k) clearance assumed a locked algorithm; TEMPO is designed for the kind of system an autopilot actually needs to be.

Utah’s AI Prescribing Pilot is the first state-level framework that shifts prescribing authority from “physician + AI copilot” to “AI autopilot” with supervision. Initially scoped to medication refills, it lets AI systems generate prescriptions with verification of the first N prescriptions for each medication, and then quality oversight based on sampling thereafter. If it works, other states will follow.
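To make the “verify the first N, then sample” oversight pattern concrete, here is a minimal Python sketch. It is an illustration only, not the pilot’s actual rules: the class name `RefillOversight`, the `first_n` threshold, and the `sample_rate` are all hypothetical values chosen for the example.

```python
import random
from collections import defaultdict


class RefillOversight:
    """Hypothetical sketch: review the first N prescriptions per medication,
    then review a random sample of the rest."""

    def __init__(self, first_n=10, sample_rate=0.05, rng=None):
        self.first_n = first_n          # assumed threshold, not from the pilot
        self.sample_rate = sample_rate  # assumed sampling fraction
        self.rng = rng or random.Random()
        self.issued = defaultdict(int)  # prescriptions seen per medication

    def needs_review(self, medication: str) -> bool:
        """Record one AI-generated prescription and decide whether a human reviews it."""
        self.issued[medication] += 1
        if self.issued[medication] <= self.first_n:
            return True  # verification phase: every prescription is checked
        # Sampling phase: check a random fraction for quality oversight.
        return self.rng.random() < self.sample_rate


# With sampling turned off, only the first three refills are reviewed.
oversight = RefillOversight(first_n=3, sample_rate=0.0)
print([oversight.needs_review("atorvastatin") for _ in range(5)])
# [True, True, True, False, False]
```

The counter is kept per medication, so a new drug starts again in the full-verification phase even if the system already has a long track record with other drugs.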

Together, these constitute a full-stack regulatory framework for healthcare autopilots: ACCESS provides the payment model, ARPA-H provides the R&D mandate, Utah provides the prescribing authority, and TEMPO provides the device-approval pathway. For the first time, a clinical AI autopilot has a plausible path from development through reimbursement.

How copilots and autopilots will converge

Copilots and autopilots will converge over time: “today’s judgement will become tomorrow’s intelligence.” The feedback loops that keep tasks in copilot territory get shorter as models improve, evidence accumulates, and regulators gain confidence. A task that requires a physician’s judgement today (say, adjusting a statin dose based on a lipid panel) may be fully automatable in two years, not because the AI got smarter, but because the feedback loop shortened: more outcomes data, clearer protocols, and a regulatory framework that permits autonomous action. The strategic implication is that copilot companies should build toward autopilot from day one by capturing the data, earning the trust, and proving the outcomes. Companies that build copilots without an autopilot roadmap risk getting disrupted by autopilot-native entrants who can deliver a better experience at a lower cost.

For healthcare specifically, this means the most valuable AI companies won’t just build good clinical models. They’ll build the regulatory, clinical-evidence, and trust infrastructure that lets those models operate autonomously. The model is necessary but not sufficient. The infrastructure is what makes it a business.
